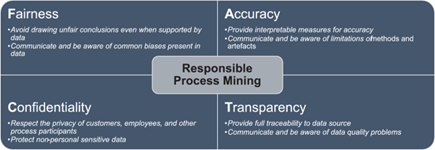

Responsible Process Mining as a topic is introduced in [1]:

“The prospect of data misuses negatively affecting our life has led to the concept of responsible data science. It advocates for responsibility to be built, by design, into data management, data analysis, and algorithmic decision-making techniques such that it is made difficult or even impossible to cause harm intentionally or unintentionally. Process mining techniques are no exception to this and may be misused and lead to harm. Decisions based on process mining may lead to unfair decisions causing harm to people by amplifying the biases encoded in the data by disregarding infrequently observed or minority cases. Insights obtained may lead to inaccurate conclusions due to failing to consider the quality of the input event data. Confidential or personal information on process stakeholders may be leaked as the precise work behavior of an employee can be revealed. Process mining models are usually white box but may still be difficult to interpret correctly without expert knowledge hampering the transparency of the analysis.”

Problem

Confidentiality includes respecting the privacy of individuals connected to the process that is being analyzed. Event logs contain sequential data (traces of events) and often traces are related to individuals, e.g., in a loan application process a trace may include all recorded events for a single customer. Also, individual events may be related to an individual, e.g., the employee who performed a certain activity. Further details can be read in [2, 4]. The analysis of a process should be about the process and not about work surveillance of individual employees. Disregarding privacy can lead to acceptance problems (e.g., works councils rejecting certain analyses) or fines due to privacy regulations (e.g., GDPR).

Privacy-preserving process mining methods try to mitigate some of these risks by providing guarantees under certain assumptions that it is impossible (or unlikely) that the connection between individual traces or events and the related individuals can be made. Assumptions are often related to the background knowledge an attacker is assumed to have. A good overview of how this can be conceptualized is given in [6].

Many such methods work by somehow changing the (to be protected) source event log and produce a protected event log in which events are modified. Guarantees provided can be based on (statistical) indistinguishability notions such as differential privacy [5] or group-based notions such as k-anonymity [6]. However, many more protection scenarios are possible. For example, in [3] the disclosure risk of publishing a (discovered) process model with frequencies is investigated. Also, the nature of events could be taken into account. Notifications in a loan application process may be much less critical compared to events based on sensor data of human movement in a smart home or industrial production scenario or data in task mining capturing the actions or movements performed on a computer screen.

Proposed contribution

The research ambition for this project is: “How can we build responsible process mining systems that respect the privacy of stakeholders while still providing useful and trustworthy insights?”. So far, the proposed privacy-preserving process mining methods have largely been developed to target event logs. An event log is taken as input and another event log is created (as in [6]) or certain abstractions (e.g., directly-follows relations) can be queried from a protected event log (as in [5]). However, a process analyst may only be using aggregated information from the event log such as a process model with frequencies or probabilities. Intuitively such aggregation would already hide some of the information about individuals (as shown in [3]). Can we quantify this and provide guarantees at the level of typical process mining outputs rather than at the level of the event log?

Contact

Felix Mannhardt, f.mannhardt@tue.nl